Polymarket Twin Engine

Deterministic trading infrastructure for prediction markets.

Built as a unified execution engine where live trading and historical replay run through the exact same runtime, ensuring consistent strategy behavior and reproducible results.

- Tick-level order book reconstruction

- Shared execution pipeline (live & replay)

- Deterministic portfolio & PnL accounting

Project Summary

A trading infrastructure platform built around one architectural constraint: live execution and backtesting must share a single execution path. No environment flags, no conditional branches - the same StrategyRunner, OrderManager, and Portfolio logic processes every event in both modes.

This constraint shaped every design decision. Market data is persisted as raw, ordered events in Parquet so replay can reconstruct exact book state. Strategies are schema-validated and plugin-driven so they remain portable across environments. Fills are latency-aware so backtest results reflect real execution dynamics. The result is a full research-to-production platform, not a trading bot with a backtest mode bolted on.

🚩 Problem

No backtesting infrastructure existed for Polymarket. Strategies had to be tested live with real capital, with no way to validate behavior before deployment.

The gap isn't just tooling:

- No existing solution: with no backtesting system available, every strategy iteration required real money and real risk.

- Unique market mechanics: binary outcomes, merge/redemption settlement, and time-bounded markets don't fit traditional trading tools.

- No historical replay capability: without tick-level order book data and deterministic replay, strategy performance can't be measured or reproduced.

- Unverifiable execution quality: simplified fill assumptions hide the spread, latency, and queue dynamics that determine real trading outcomes.

Building a backtesting engine for prediction markets meant solving all of these from scratch.

🛠 Solution

Rather than adapting traditional backtesting tools, the architecture was designed specifically for prediction markets with one core constraint: live trading and historical replay must run through the same execution pipeline.

- Unified execution engine: one StrategyRunner, one OrderManager, one Portfolio. The pipeline consumes events from either a live data source or a historical replay. Downstream logic doesn't know the difference.

- Tick-level order book reconstruction: every raw CLOB event is recorded with strict ordering and timestamps, then replayed to reconstruct exact book state for realistic strategy evaluation.

- Latency-aware fill model: execution simulates the real delay between order intent and exchange visibility, closing the gap between simulated and actual fill behavior.

- Full prediction market settlement: portfolio accounting handles the complete market lifecycle including binary resolution, merge, and redemption, so PnL is fully resolved regardless of outcome.

The key insight was that execution determinism isn't a feature you add - it's an architectural constraint you design around from the start.
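As an illustration of the tick-level reconstruction described above, the following is a minimal sketch of rebuilding book state from an ordered event stream. The event shapes (`snapshot`, `delta`, `seq`) are hypothetical and do not represent Polymarket's actual CLOB message schema; a production book would likely key prices by integer ticks or `Decimal` rather than floats.

```python
from dataclasses import dataclass, field


@dataclass
class OrderBook:
    # price -> resting size; floats are used here only to keep the sketch short
    bids: dict = field(default_factory=dict)
    asks: dict = field(default_factory=dict)

    def apply(self, event: dict) -> None:
        """Apply one recorded event (hypothetical shapes: 'snapshot' or 'delta')."""
        if event["type"] == "snapshot":
            # A snapshot replaces the whole book.
            self.bids = dict(event["bids"])
            self.asks = dict(event["asks"])
        elif event["type"] == "delta":
            side = self.bids if event["side"] == "bid" else self.asks
            if event["size"] == 0:
                side.pop(event["price"], None)  # size 0 removes the level
            else:
                side[event["price"]] = event["size"]

    def best_bid(self):
        return max(self.bids) if self.bids else None

    def best_ask(self):
        return min(self.asks) if self.asks else None


def replay(events) -> OrderBook:
    """Rebuild the book by applying events in strict sequence order."""
    book = OrderBook()
    for event in sorted(events, key=lambda e: e["seq"]):
        book.apply(event)
    return book


events = [
    {"seq": 1, "type": "snapshot", "bids": [(0.48, 100.0)], "asks": [(0.52, 80.0)]},
    {"seq": 2, "type": "delta", "side": "bid", "price": 0.49, "size": 50.0},
    {"seq": 3, "type": "delta", "side": "ask", "price": 0.52, "size": 0},
    {"seq": 4, "type": "delta", "side": "ask", "price": 0.53, "size": 40.0},
]
book = replay(events)
print(book.best_bid(), book.best_ask())
```

Because the book is a pure function of the ordered event list, replaying the same recording always yields the same state, which is the property the rest of the pipeline depends on.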

📐 System Architecture

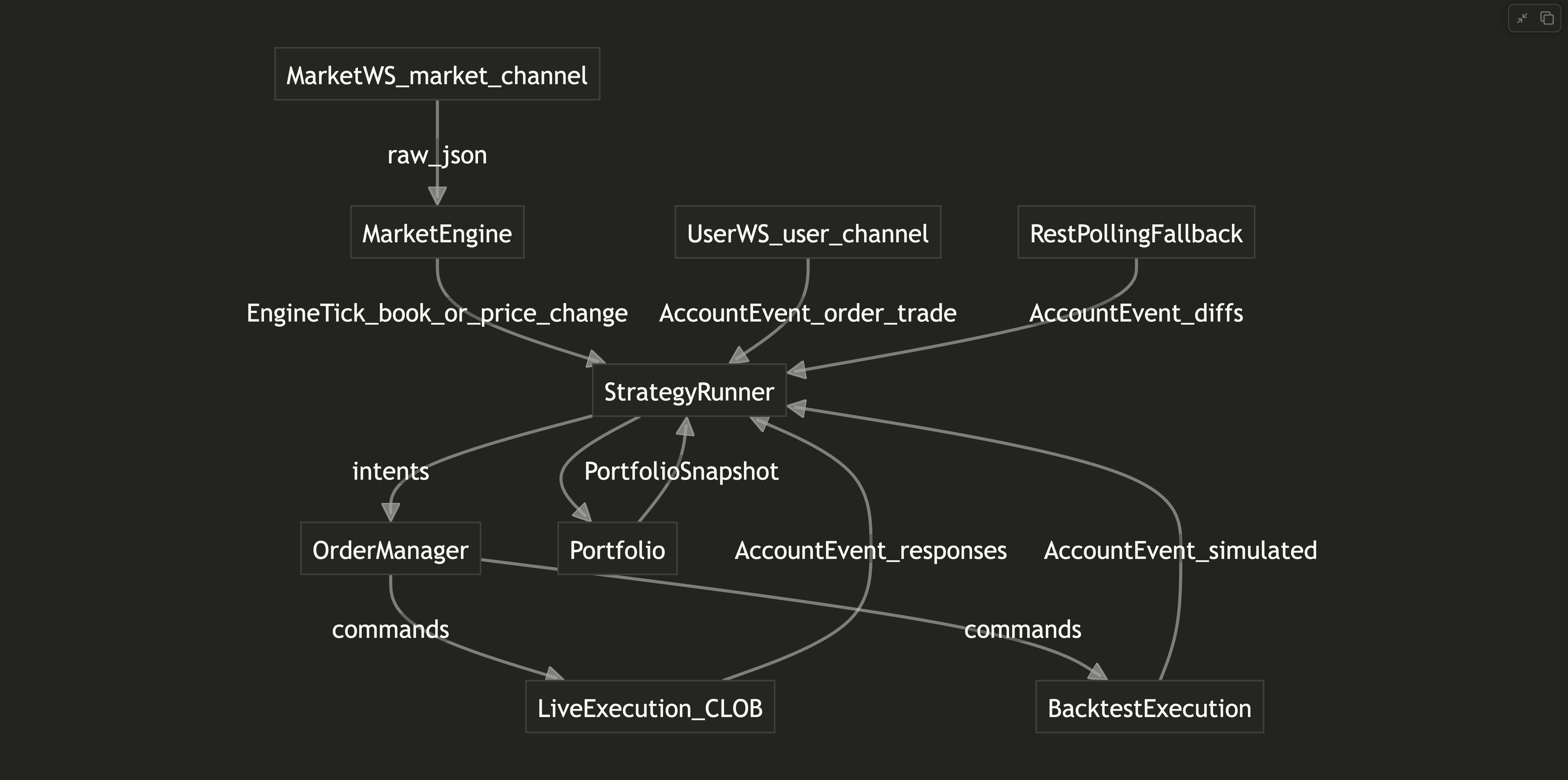

The system is structured as a layered event-driven pipeline. Each layer has a single responsibility and communicates through a shared event model:

- Ingestion layer: subscribes to Polymarket's CLOB WebSocket, persists every raw event with strict ordering, and handles window rotation and disconnect markers for dataset integrity.

- Order book layer: reconstructs full book state tick-by-tick from the raw event stream. Operates identically on live and historical data.

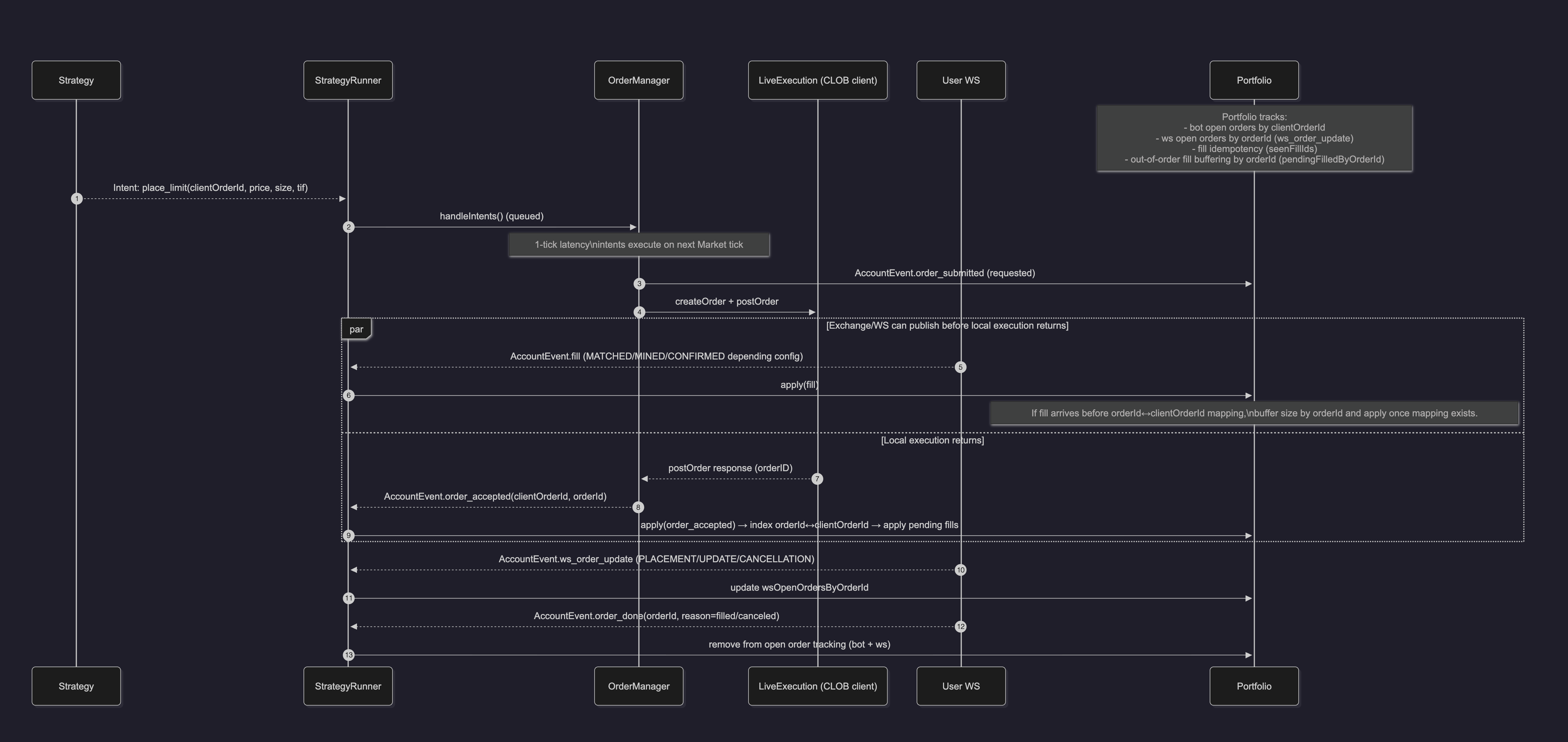

- Execution layer: the core event loop. Processes market ticks, invokes strategy evaluation, and routes orders through the OrderManager with full lifecycle tracking (intent queue, cancel/replace, status reconciliation).

- Strategy layer: modular, schema-validated strategies registered with typed parameters. Supports an event-driven plugin system for computed signals and external data without coupling strategies to specific data sources.

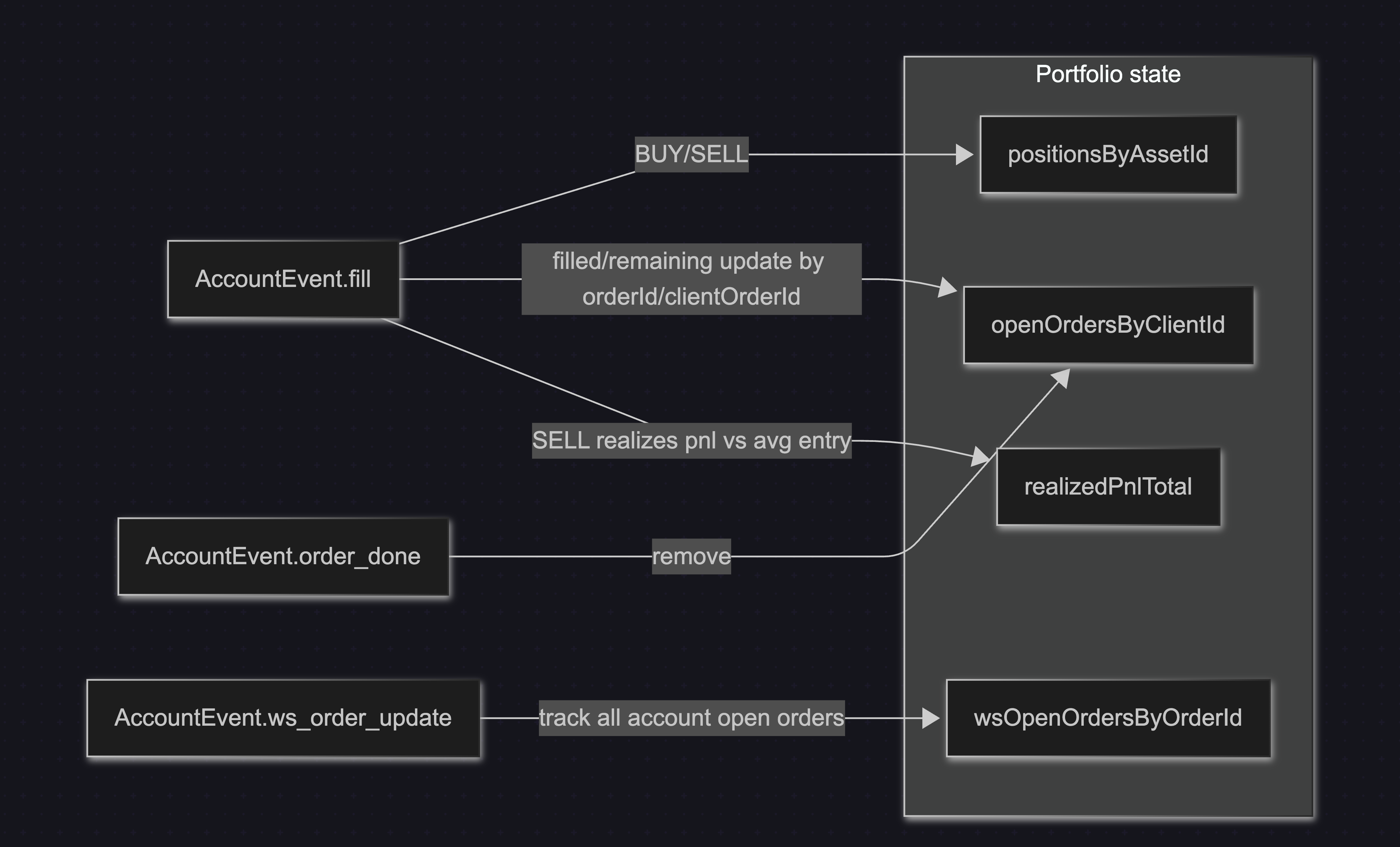

- Portfolio layer: tracks positions, open orders, fills, and realized PnL as a deterministic state machine. Environment-agnostic, including end-of-market settlement.

- Storage layer: structured historical storage with per-window file rotation and explicit disconnect markers, so replays can distinguish between missing data and market silence.

The layers compose into the same pipeline in every mode. Swapping the data source is the only difference between live and replay.
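The idea that only the data source changes between modes can be sketched as follows. This is an illustrative reduction, not the platform's actual code: `EventSource`, `ReplaySource`, `FakeLiveSource`, `Engine`, and the tick fields are hypothetical names, and the "live" source is a stand-in iterator rather than a real WebSocket client.

```python
from typing import Iterable, Iterator, Optional, Protocol


class EventSource(Protocol):
    """Anything that yields market events in order."""

    def events(self) -> Iterator[dict]: ...


class ReplaySource:
    """Yields events from a historical recording."""

    def __init__(self, recorded: Iterable[dict]):
        self._recorded = list(recorded)

    def events(self) -> Iterator[dict]:
        yield from self._recorded


class FakeLiveSource:
    """Stand-in for a live feed; emits the same event shape as replay."""

    def __init__(self, feed: Iterator[dict]):
        self._feed = feed

    def events(self) -> Iterator[dict]:
        for event in self._feed:
            yield event


class Engine:
    """Single execution path: it never checks which mode it is running in."""

    def __init__(self, strategy):
        self.strategy = strategy
        self.orders = []

    def run(self, source: EventSource) -> list:
        for event in source.events():
            order = self.strategy(event)
            if order is not None:
                self.orders.append(order)
        return self.orders


def buy_below_half(event: dict) -> Optional[dict]:
    # Toy strategy: buy when the best ask drops below 0.50.
    if event["best_ask"] < 0.5:
        return {"side": "buy", "price": event["best_ask"], "ts": event["ts"]}
    return None


ticks = [{"ts": 1, "best_ask": 0.55}, {"ts": 2, "best_ask": 0.47}]
live_orders = Engine(buy_below_half).run(FakeLiveSource(iter(ticks)))
replay_orders = Engine(buy_below_half).run(ReplaySource(ticks))
print(live_orders == replay_orders)
```

The engine has no mode flag to branch on, so any divergence between live and replay has to come from the input events rather than from the execution logic.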

🧠 Engineering Highlights

- Replay fidelity: tick-by-tick engine that reconstructs full order book state from raw WebSocket events, producing exact historical simulation down to individual book updates.

- Extensible signal model: plugin architecture for attaching external data feeds (Binance klines, Deribit DVOL, custom indicators) as cached snapshots. Strategies consume signals through a uniform interface without knowledge of the data source.

- Execution realism: latency-aware fill simulation that models intent-to-visibility delay, preventing the optimistic fill assumptions that inflate backtest performance.

- Configuration safety: schema-validated strategy registration that enforces parameter types and constraints at load time, eliminating silent misconfiguration.

- Systematic optimization: batch analytics and grid search tooling for evaluating strategies across parameter spaces, with feature export pipelines for offline analysis.

🔥 Metrics & Impact

- Achieved 99.6% behavioral parity between live and backtest, measured by comparing strategy outputs on identical input data.

- Order-to-book latency p95 ~90ms: submit → exchange acknowledgment → order book visibility.

- 200M+ external market events ingested and persisted daily (crypto feeds).

- Parallel backtesting across multiple markets, with parameter grid search for accelerated strategy evaluation.

- Live deployment: automated strategies executing in production with realized PnL tracking and full market settlement.

👤 My Role

Designed and built the entire platform end-to-end:

- Architecture: defined the single-pipeline constraint and layered event-driven design that ensures execution determinism across environments.

- Data infrastructure: built the ingestion pipeline, storage layer, and replay engine with tick-level order book reconstruction.

- Execution system: implemented the live trading engine including order management with risk limits, cancel/replace logic, and out-of-order fill handling.

- Strategy platform: designed the modular framework, plugin system, and schema validation layer for portable, reproducible strategy development.

- Research tooling: built batch analytics, grid search, and feature export pipelines for systematic strategy evaluation.

- Operations: created CLI tooling for key management, balance checks, approvals, and multi-bot deployment workflows.

Core Design Decisions

Backtesting System

The backtesting engine replays recorded market data tick-by-tick, reconstructs the order book exactly as it appeared live, and runs the same StrategyRunner/OrderManager/Portfolio logic used in production. It supports latency simulation (intent -> exchange visibility) to better match real fills and includes market-end settlement logic (merge/redeem) so PnL is fully accounted for per market. This makes research outcomes far more realistic and reduces the typical live-vs-backtest gap.
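A minimal sketch of the latency idea, under simplifying assumptions: a buy limit order only becomes eligible to fill once `latency_ms` has elapsed after the intent, and it fills on the first later tick whose best ask is at or below the limit. The names `Tick`, `BuyIntent`, and `simulate_fill` are hypothetical, queue position and partial fills are ignored, and the 90 ms value is used purely as an illustrative latency.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Tick:
    ts_ms: int
    best_ask: float


@dataclass(frozen=True)
class BuyIntent:
    ts_ms: int
    limit_price: float


def simulate_fill(intent: BuyIntent, ticks: Sequence[Tick], latency_ms: int) -> Optional[Tick]:
    """Return the first tick at which the order could fill, or None.

    The order is invisible to the exchange until intent time + latency,
    so ticks before that point can never produce a fill.
    """
    visible_at = intent.ts_ms + latency_ms
    for tick in ticks:
        if tick.ts_ms >= visible_at and tick.best_ask <= intent.limit_price:
            return tick
    return None


ticks = [Tick(1000, 0.50), Tick(1040, 0.50), Tick(1100, 0.53), Tick(1200, 0.50)]
intent = BuyIntent(ts_ms=1000, limit_price=0.50)

naive = simulate_fill(intent, ticks, latency_ms=0)       # fills on the triggering tick
realistic = simulate_fill(intent, ticks, latency_ms=90)  # must wait for a later tick
print(naive, realistic)
```

With zero latency the order fills instantly at the tick that triggered it. With the delay applied, the price has moved away by the time the order is visible and the fill only happens at the 1200 ms tick, which is the kind of optimism the latency model is meant to remove.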

Live Data Recording & Replay

The system resolves the current markets, subscribes to the CLOB market WebSocket, and persists every raw event to Parquet with strict ordering and timestamps. Those files are rotated per window and include explicit disconnect markers, making the dataset auditable and safe to replay deterministically.
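The recording scheme can be sketched with the standard library alone. The sketch below shows only the bookkeeping: a monotonically increasing sequence number, per-window file naming, and a disconnect marker stored as an ordinary record. The 15-minute window, the file name pattern, and the `Record` fields are assumptions for illustration, and the actual Parquet write is left out.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

WINDOW_SECONDS = 900  # hypothetical 15-minute rotation window


@dataclass(frozen=True)
class Record:
    seq: int        # strict global ordering
    recv_ts: float  # receive time, epoch seconds
    kind: str       # "event" or "disconnect"
    payload: str    # raw message, stored untouched


def window_file(recv_ts: float, window_seconds: int = WINDOW_SECONDS) -> str:
    """Map a receive timestamp to the file for its window (hypothetical naming)."""
    start = int(recv_ts // window_seconds) * window_seconds
    stamp = datetime.fromtimestamp(start, tz=timezone.utc).strftime("%Y%m%dT%H%M%SZ")
    return f"events_{stamp}.parquet"


class Recorder:
    def __init__(self):
        self._seq = 0
        self.windows = {}  # file name -> list of records (stand-in for Parquet files)

    def _append(self, recv_ts: float, kind: str, payload: str) -> Record:
        self._seq += 1
        record = Record(self._seq, recv_ts, kind, payload)
        self.windows.setdefault(window_file(recv_ts), []).append(record)
        return record

    def on_message(self, recv_ts: float, raw: str) -> Record:
        return self._append(recv_ts, "event", raw)

    def on_disconnect(self, recv_ts: float) -> Record:
        # An explicit marker lets replay tell a feed gap from a quiet market.
        return self._append(recv_ts, "disconnect", "")


recorder = Recorder()
recorder.on_message(0.0, '{"price": "0.48"}')
recorder.on_disconnect(10.0)
recorder.on_message(900.0, '{"price": "0.49"}')
print(sorted(recorder.windows))
```

Storing the disconnect as a record in the same ordered stream means a replay can treat it like any other event and, for example, invalidate the book until the next snapshot arrives.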

Strategy Framework

Strategies are modular and registered with schema-validated parameters, so live runs and backtests are consistent and reproducible. The framework is event-driven (market ticks + account events), and it supports optional plugins to attach computed signals without changing core strategy code.
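One way to picture schema-validated registration is a registry that checks parameter names, types, and bounds before a strategy is constructed. This is a sketch under assumptions: `Param`, `register`, `load_strategy`, and the `threshold_buyer` strategy are hypothetical names, and the type check is deliberately strict (an `int` is not accepted where a `float` is declared).

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Param:
    type: type
    minimum: Optional[float] = None
    maximum: Optional[float] = None


REGISTRY = {}  # strategy name -> (schema, factory)


def register(name: str, schema: dict) -> Callable:
    """Decorator that registers a strategy factory together with its schema."""
    def decorator(factory: Callable) -> Callable:
        REGISTRY[name] = (schema, factory)
        return factory
    return decorator


def load_strategy(name: str, params: dict):
    """Validate params against the schema at load time, then build the strategy."""
    schema, factory = REGISTRY[name]
    unknown = set(params) - set(schema)
    missing = set(schema) - set(params)
    if unknown or missing:
        raise ValueError(f"unknown={sorted(unknown)} missing={sorted(missing)}")
    for key, spec in schema.items():
        value = params[key]
        if type(value) is not spec.type:  # strict: no implicit int -> float
            raise TypeError(f"{key} must be {spec.type.__name__}")
        if spec.minimum is not None and value < spec.minimum:
            raise ValueError(f"{key} below minimum {spec.minimum}")
        if spec.maximum is not None and value > spec.maximum:
            raise ValueError(f"{key} above maximum {spec.maximum}")
    return factory(**params)


@register("threshold_buyer", {"max_price": Param(float, 0.0, 1.0), "size": Param(int, 1)})
def threshold_buyer(max_price: float, size: int):
    # Returns the order size to buy at a given best ask (0 means no order).
    return lambda best_ask: size if best_ask <= max_price else 0


strategy = load_strategy("threshold_buyer", {"max_price": 0.45, "size": 10})
print(strategy(0.40), strategy(0.50))
```

Since the same validated parameter set is used to construct the strategy in both modes, a typo or out-of-range value fails at load time instead of silently changing behavior in one environment.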

Strategy Plugins

The execution engine includes a deterministic plugin layer that updates once per market tick and exposes cached snapshots to strategies. Plugins provide computed signals and external data while preserving identical runtime behavior across live and replay environments.

- 100+ Technical Indicators: ATR, ADX, Bollinger Bands, realized volatility, and session metadata from external market data.

- Rolling Volatility & Range Stats: window-based price movement and dispersion metrics per asset.

- Time & Price Gates: configurable time-window trading controls and dwell constraints.

- External Volatility Feeds: integrated sources such as Deribit DVOL aligned to market lifecycle.

- External Data Requests: declarative external feed integration fulfilled at runtime.
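The "update once per tick, expose cached snapshots" pattern described above can be sketched as follows. `PluginHost` and `RollingRangePlugin` are hypothetical names, and the rolling range over mid prices is just one example of a window-based statistic.

```python
from collections import deque


class RollingRangePlugin:
    """Tracks high, low, and range of the mid price over the last `window` ticks."""

    name = "rolling_range"

    def __init__(self, window: int):
        self._mids = deque(maxlen=window)
        self._snapshot = {}

    def on_tick(self, tick: dict) -> None:
        self._mids.append(tick["mid"])
        high, low = max(self._mids), min(self._mids)
        self._snapshot = {"high": high, "low": low, "range": high - low}

    def snapshot(self) -> dict:
        # Return a copy so strategies cannot mutate plugin state.
        return dict(self._snapshot)


class PluginHost:
    """Updates every plugin exactly once per tick, then caches the snapshots."""

    def __init__(self, plugins):
        self._plugins = list(plugins)
        self._cache = {}

    def on_tick(self, tick: dict) -> None:
        self._cache = {}
        for plugin in self._plugins:
            plugin.on_tick(tick)
            self._cache[plugin.name] = plugin.snapshot()

    def signals(self) -> dict:
        # Strategies read from the cache; they never call the plugins directly.
        return self._cache


host = PluginHost([RollingRangePlugin(window=3)])
for mid in (0.50, 0.54, 0.51, 0.49):
    host.on_tick({"mid": mid})
signals = host.signals()
print(signals["rolling_range"])
```

Because plugin state advances only in `on_tick`, how often a strategy reads `signals()` has no effect on the values it sees, which keeps live and replay runs aligned.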

Execution & Risk Management

All orders flow through a central OrderManager that enforces risk limits and manages lifecycle (intent queue, cancel/replace, order status). Execution is abstracted so the same logic works in live trading and in backtests, with consistent handling of out-of-order fills.
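A reduced sketch of this lifecycle is shown below. It assumes a single notional risk limit and interprets "out-of-order fills" as fills that arrive before the exchange acknowledgment of the order; both are assumptions made for illustration, and all class and method names are hypothetical.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Order:
    order_id: str
    price: float
    size: float
    filled: float = 0.0
    status: str = "queued"  # queued -> open -> filled, or rejected


class OrderManager:
    def __init__(self, max_open_notional: float):
        self.max_open_notional = max_open_notional
        self.intents = deque()   # order ids waiting to be sent to the venue
        self.orders = {}
        self._early_fills = {}   # fills received before the order was acked

    def submit(self, order: Order) -> str:
        """Risk check first; only accepted orders enter the intent queue."""
        exposure = sum(
            o.price * (o.size - o.filled)
            for o in self.orders.values()
            if o.status in ("queued", "open")
        )
        if exposure + order.price * order.size > self.max_open_notional:
            order.status = "rejected"
        else:
            self.intents.append(order.order_id)
        self.orders[order.order_id] = order
        return order.status

    def on_ack(self, order_id: str) -> None:
        order = self.orders[order_id]
        order.status = "open"
        # Apply any fills that raced ahead of the acknowledgment.
        for size in self._early_fills.pop(order_id, []):
            self._apply_fill(order, size)

    def on_fill(self, order_id: str, size: float) -> None:
        order = self.orders.get(order_id)
        if order is None or order.status == "queued":
            self._early_fills.setdefault(order_id, []).append(size)
            return
        self._apply_fill(order, size)

    def _apply_fill(self, order: Order, size: float) -> None:
        order.filled += size
        if order.filled >= order.size:
            order.status = "filled"


manager = OrderManager(max_open_notional=100.0)
first_status = manager.submit(Order("a", price=0.5, size=100))
second_status = manager.submit(Order("b", price=0.6, size=100))
manager.on_fill("a", 100)  # fill arrives before the ack
status_before_ack = manager.orders["a"].status
manager.on_ack("a")
print(first_status, second_status, status_before_ack, manager.orders["a"].status)
```

Buffering early fills keeps the final order state the same regardless of the order in which the acknowledgment and fill messages arrive.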

Portfolio & PnL Accounting

A deterministic portfolio layer tracks positions, open orders, fills, and realized PnL. It is event-driven and consistent across live and backtest, including end-of-market settlement for complete PnL reporting.
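The settlement flow can be illustrated with a small state machine for one binary market. The sketch assumes that merging one YES share with one NO share returns one unit of collateral, and that each winning share redeems for one unit after resolution. Fees are ignored, and the `Portfolio` fields and method names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Portfolio:
    cash: float
    yes: float = 0.0
    no: float = 0.0

    def on_fill(self, outcome: str, side: str, price: float, size: float) -> None:
        """Apply a fill: buying spends cash and adds shares, selling does the reverse."""
        signed = size if side == "buy" else -size
        self.cash -= signed * price
        if outcome == "YES":
            self.yes += signed
        else:
            self.no += signed

    def merge(self, size: float) -> None:
        """Convert `size` YES+NO pairs back into `size` units of collateral."""
        if size > min(self.yes, self.no):
            raise ValueError("not enough matched pairs to merge")
        self.yes -= size
        self.no -= size
        self.cash += size

    def redeem(self, winner: str) -> None:
        """After resolution, winning shares pay 1.0 each and losing shares pay 0."""
        self.cash += self.yes if winner == "YES" else self.no
        self.yes = 0.0
        self.no = 0.0


start_cash = 100.0
portfolio = Portfolio(cash=start_cash)
portfolio.on_fill("YES", "buy", price=0.40, size=10)
portfolio.on_fill("NO", "buy", price=0.55, size=10)
portfolio.merge(4)        # 4 pairs -> 4 units of collateral
portfolio.redeem("YES")   # remaining 6 YES shares pay out, 6 NO shares expire
realized_pnl = portfolio.cash - start_cash
print(round(realized_pnl, 6))
```

After `redeem`, no positions remain, so the cash delta is the fully realized PnL for the market whichever outcome wins.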

Research & Optimization

The project includes batch-level analytics, grid search tooling, and feature export pipelines so strategies can be tuned and evaluated at scale. This makes it a full research system, not just a trading bot.
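A grid search over strategy parameters can be sketched with `itertools.product`. The `run_backtest` function here is a toy stand-in (it buys whenever the ask is at or below `max_price` and assumes the position settles at 1.0), not the platform's backtest entry point, and the parameter names are illustrative.

```python
from itertools import product


def run_backtest(asks, max_price: float, size: int) -> float:
    """Toy backtest: buy `size` at each ask <= max_price, settle every share at 1.0."""
    pnl = 0.0
    for ask in asks:
        if ask <= max_price:
            pnl += size * (1.0 - ask)
    return pnl


def grid_search(asks, grid: dict) -> list:
    """Evaluate every parameter combination and rank by PnL, best first."""
    names = sorted(grid)
    results = []
    for values in product(*(grid[name] for name in names)):
        params = dict(zip(names, values))
        results.append((run_backtest(asks, **params), params))
    # Sort on PnL only; dicts are not orderable, so a key function is required.
    results.sort(key=lambda item: item[0], reverse=True)
    return results


asks = [0.4, 0.5, 0.6]
grid = {"max_price": [0.45, 0.55], "size": [1, 2]}
results = grid_search(asks, grid)
best_pnl, best_params = results[0]
print(best_params)
```

Each combination is an independent, deterministic run, so the loop body could be handed to a process pool such as `concurrent.futures.ProcessPoolExecutor` to evaluate combinations in parallel.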

Operations & Monitoring

The system supports multi-bot deployments with per-bot configs and a lightweight monitoring UI. It also includes CLI tooling for key management, balance checks, approvals, and automation around Polymarket operational workflows.